Where have the fun architectures of the arcade gone?


I loved reading about arcade boards: how they worked, and the madness designers embraced to make arcades run. Most people remember arcades for the games, and so do I, but the hardware intrigued me just as much. When I was a kid, the pub nearest my childhood home had a Cruis'n USA cabinet. I thought it was an old PC with a CRT and a steering wheel. Nope: it was a Midway V Unit built around a Texas Instruments TMS32031, a DSP doing the job of the main CPU, with a few VLSI chips for glue logic. Not your standard PC CPU from Intel or AMD.

Now take any newer arcade board and I bet you'll find Windows Embedded/LTSC with a common x86_64 CPU.

What happened to these cool architectures?

After the electromechanical arcades of the '70s, the arcade industry shifted to pure electronics. It was an arms race to impress players around the world. Personal computers and home consoles were not yet common, so the arcade was the only place to play something interesting.

For years, the arcade was the only place to see raw computational power. To give you the sense of speed of Sega's Hang-On or Space Harrier, you needed two Motorola 68000s and SIXTEEN graphics coprocessors, just to get fast sprite scrolling with scaling and rotation. I could go on about other boards, like Namco's NB-1, with its four graphics chips and three motion coprocessors. Pre-2000 solutions were crazy: custom processors, DSPs and a hefty amount of RAM.

Then the arcade declined, slowly but surely, as consoles and PCs grew more powerful. Console-based arcade boards made their entry and became the norm for major manufacturers. Sure, boards derived from PlayStation 1 and 3DO hardware existed before this shift, but most games still ran on custom hardware. Now a retrofitted console was enough: no more parallelization, no more custom ASICs for the target hardware. Why would you spend millions designing and debugging a custom board? Why would you build a custom SDK for your own platform?

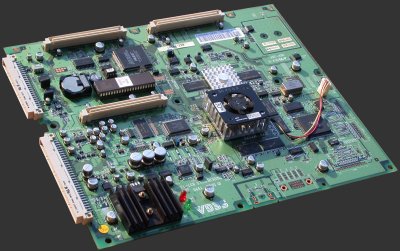

Call me dramatic, but in the 2000s the industry waved the white flag at the rising tide of cheap, powerful and boring PC hardware. Sega launched its Lindbergh platform, based on a Pentium 4 and a GeForce 6 GPU. More manufacturers followed: Taito with the Type X and Konami with its Bemani PC platform, to name a few. Custom hardware started to fade. SH-4, MIPS or console-derived architectures still existed, sure, but such systems became harder to find. No more sprawling motherboards with weird chips you can't identify without reverse engineering. Just a PC with FPGAs/MCUs to handle the I/O. The only truly custom parts, if you squint hard, are the DRM systems with lockouts or dongles.

At least it makes the MAME team's life a bit easier, even if hardware and money donations are rare. Now that the game is a Windows/Linux app, you "only" need to defeat the DRM and write wrappers that handle the custom inputs or fix problems (as TeknoParrot and Spice2x do).

So yes, we know why exotic architectures died. Custom silicon is a billion-dollar business. The PS2's Emotion Engine cost Sony millions. Namco, Taito, Konami and others could not justify that cost for a few thousand units. Meanwhile, Nvidia, AMD and Intel (hopefully) amortize their R&D costs across the datacenter and consumer PC markets.

The economy is a steamroller, eh?

I think the worst part is that we'll never get it back. The talent is gone. The engineers who lived for this kind of stuff have retired or moved to other companies. Now the hardware lore is rotting away in landfills or, if you're lucky, in eBay auctions.

The PC won, and in doing so it may have erased an ocean of alien architectures. Now it's x86_64, DirectX/Vulkan and Windows. If you want some action, the embedded systems world might interest you.

See you next time.